Select Publications

1) Characterizing Emergent Intelligence

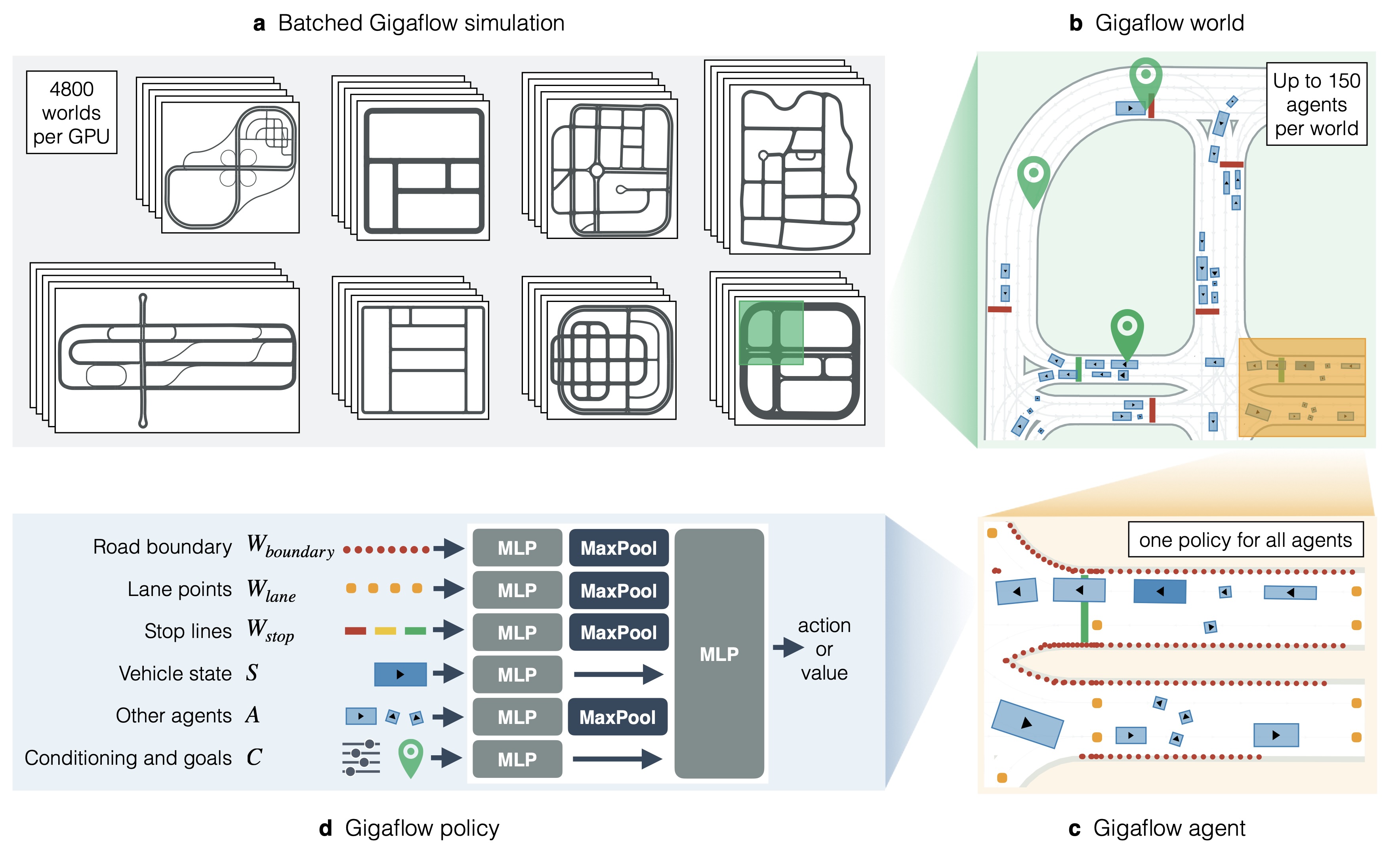

Robust Autonomy Emerges from Self-Play

Acceptance: 3,260 out of 12,107 submissions = 26.9%

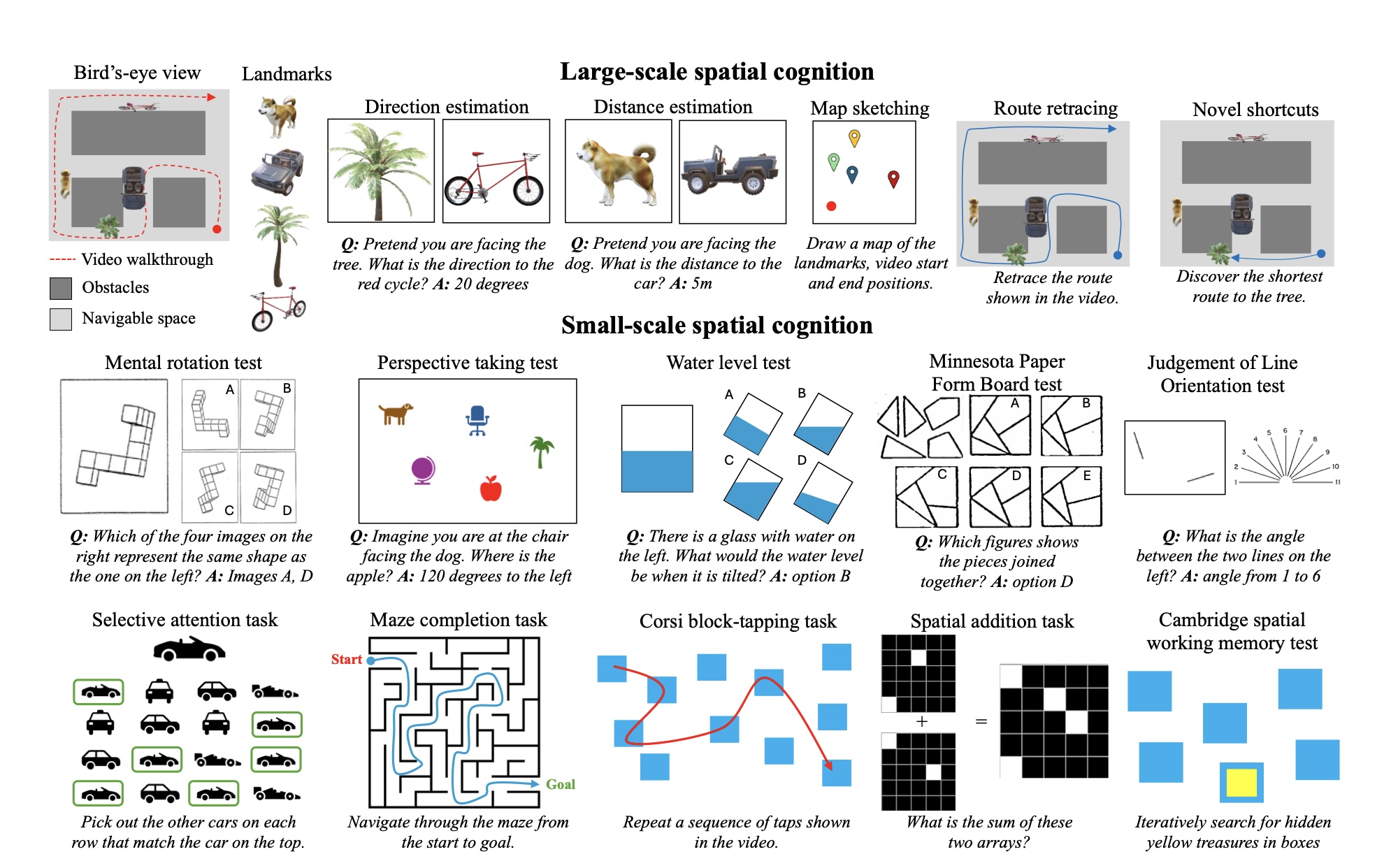

Does Spatial Cognition Emerge in Frontier Models?

Acceptance: 3,704 out of 11,672 submissions = 31.7%

Emergence of Maps in the Memories of Blind Navigation Agents

2) Large-scale Machine Learning

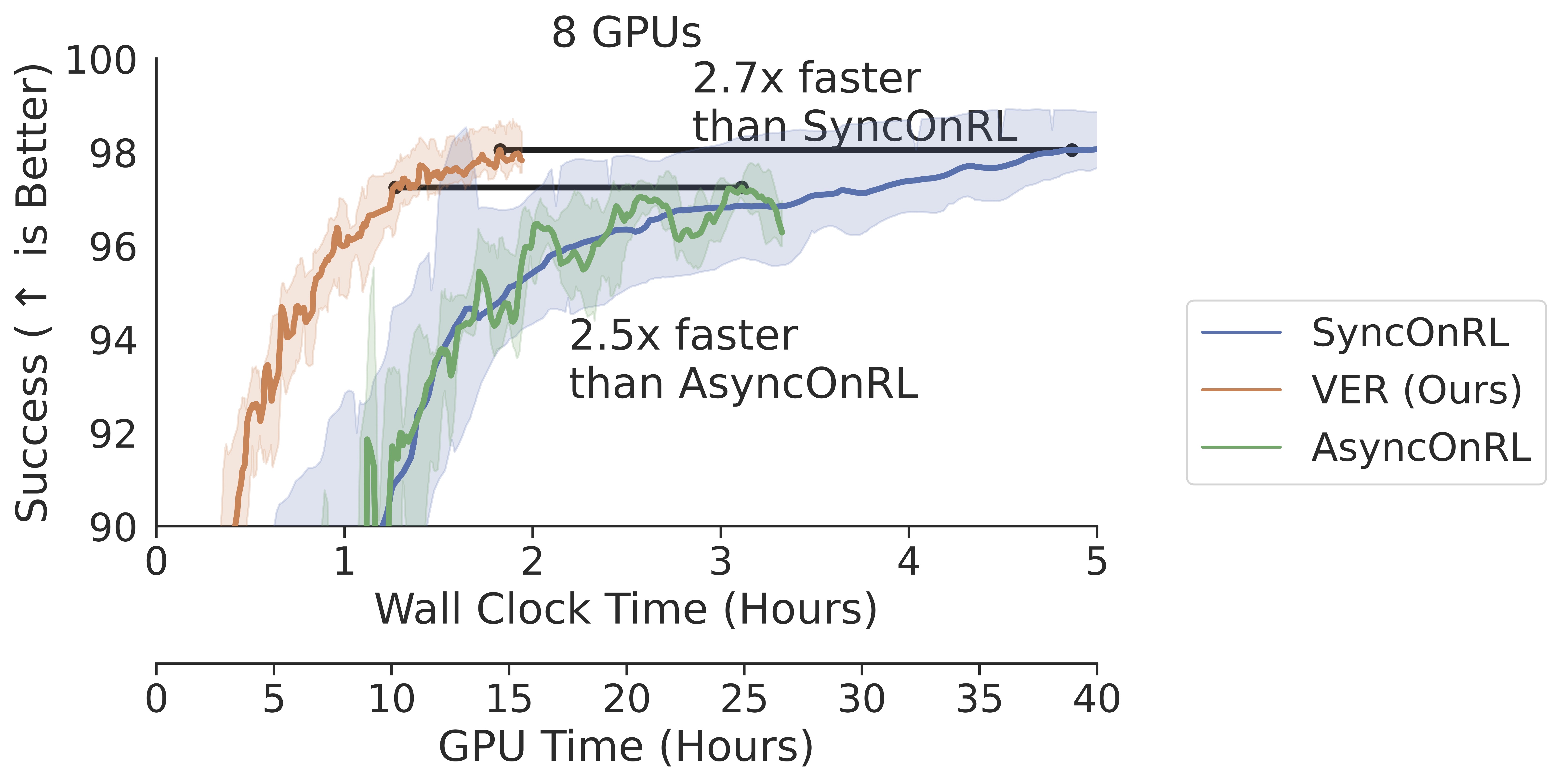

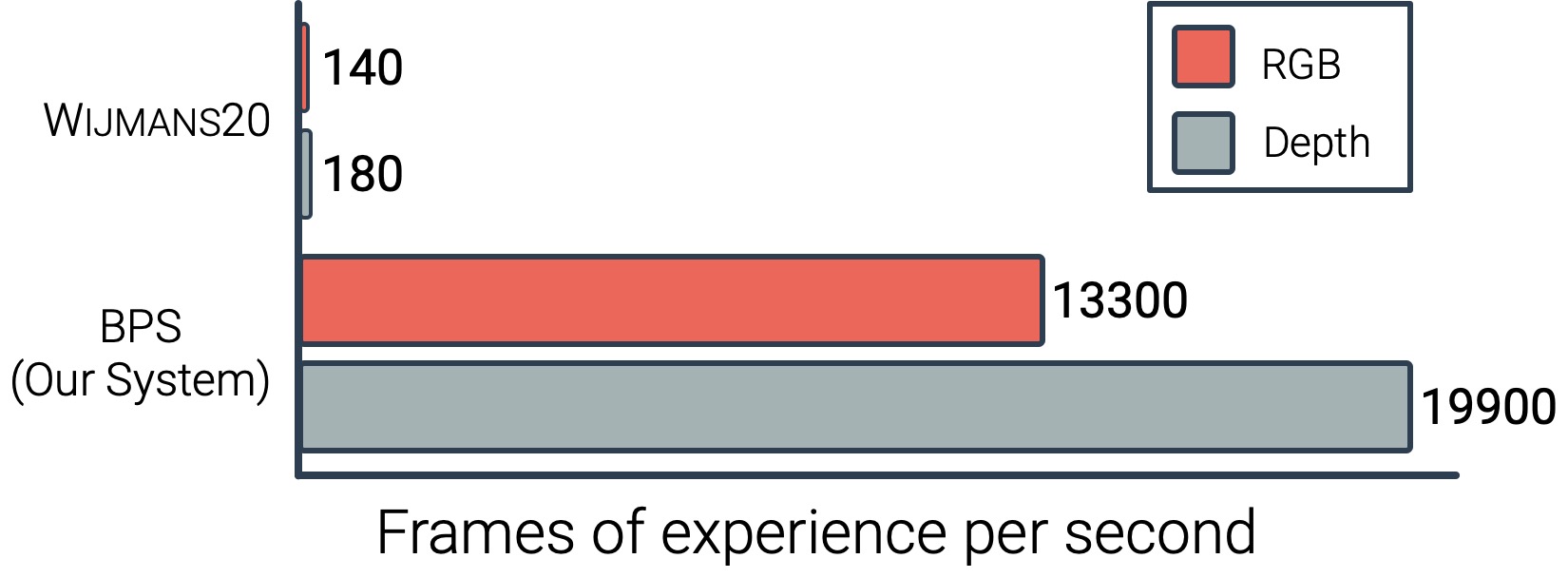

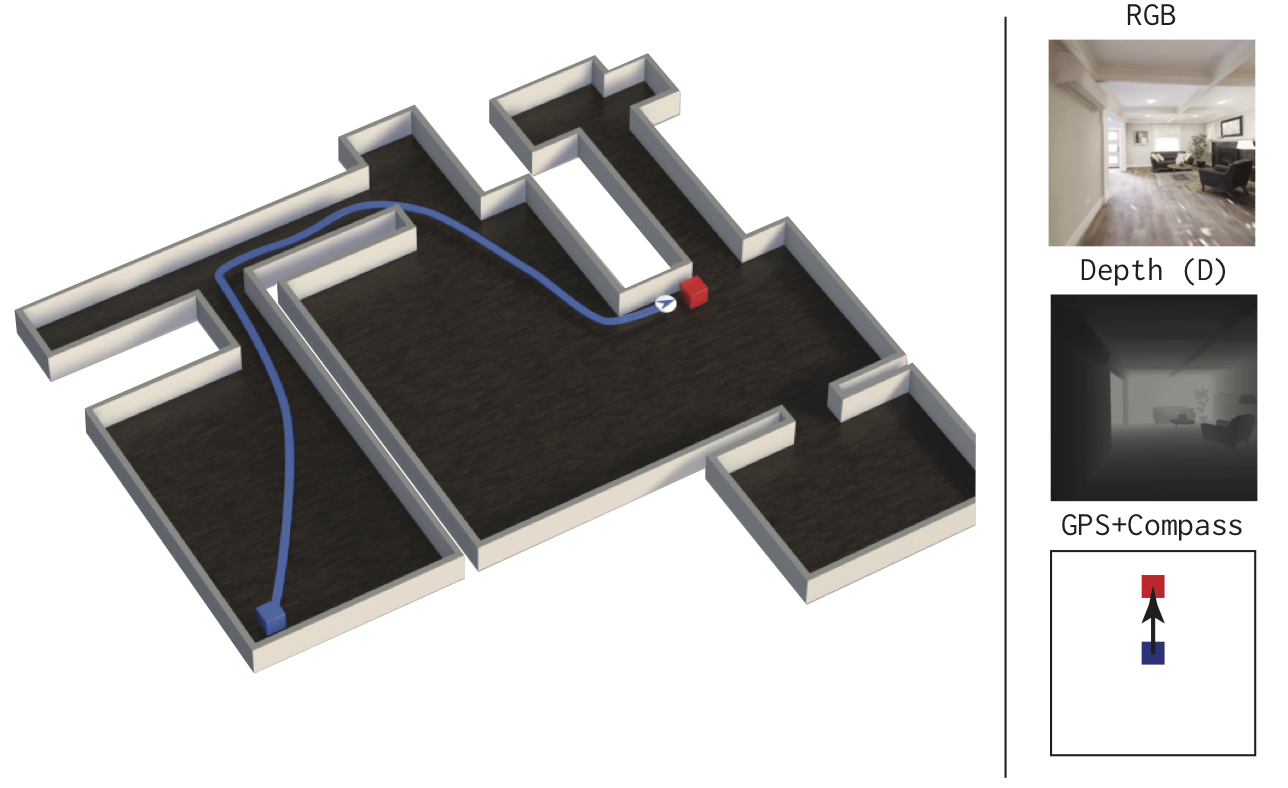

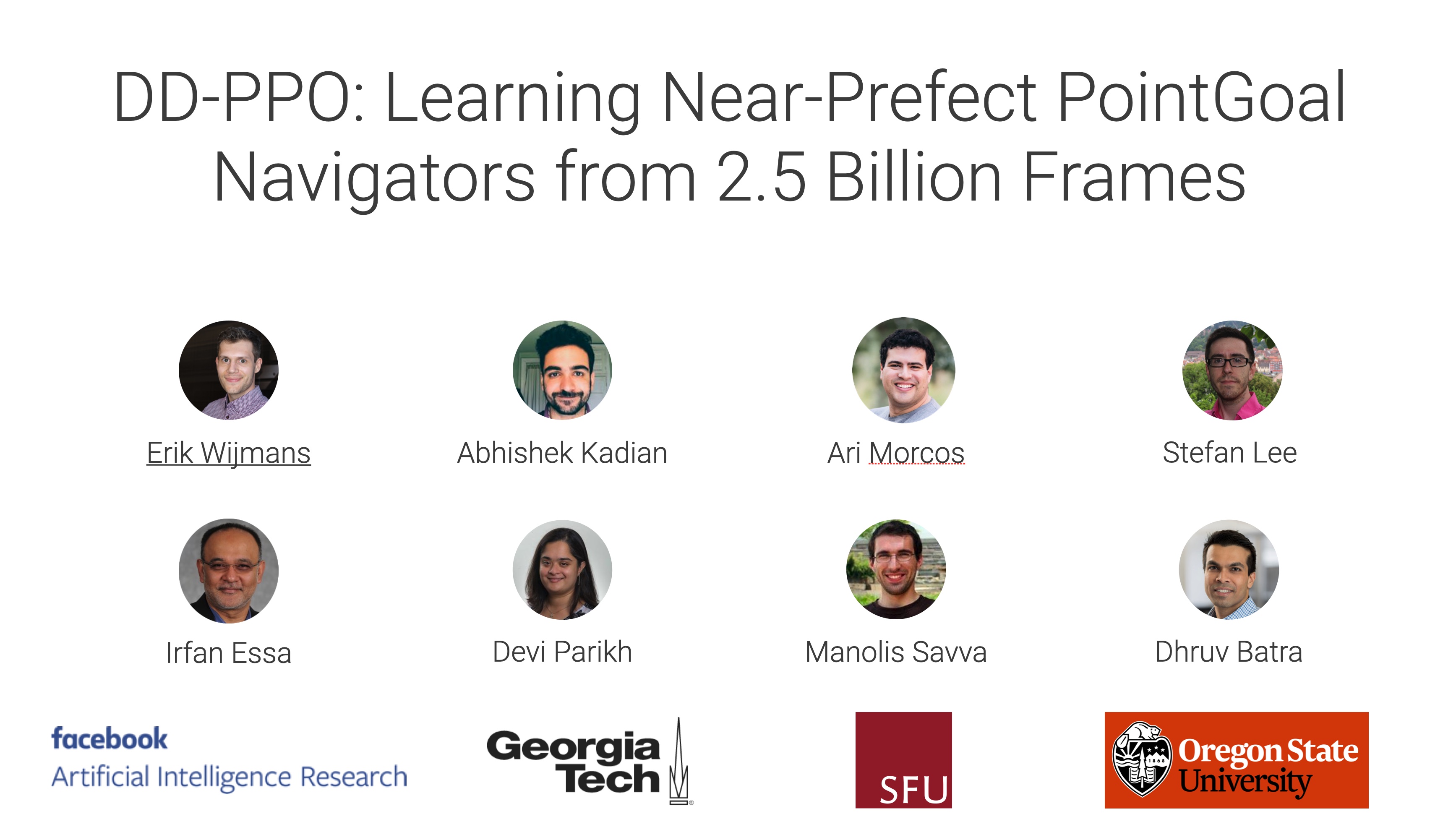

DD-PPO: Learning Near-Perfect PointGoal Navigators from 2.5 Billion Frames

Acceptance: 687 out of 2,594 submissions = 26.5%

3) 3D Datasets and Fast Simulation for Embodied AI and Robotics

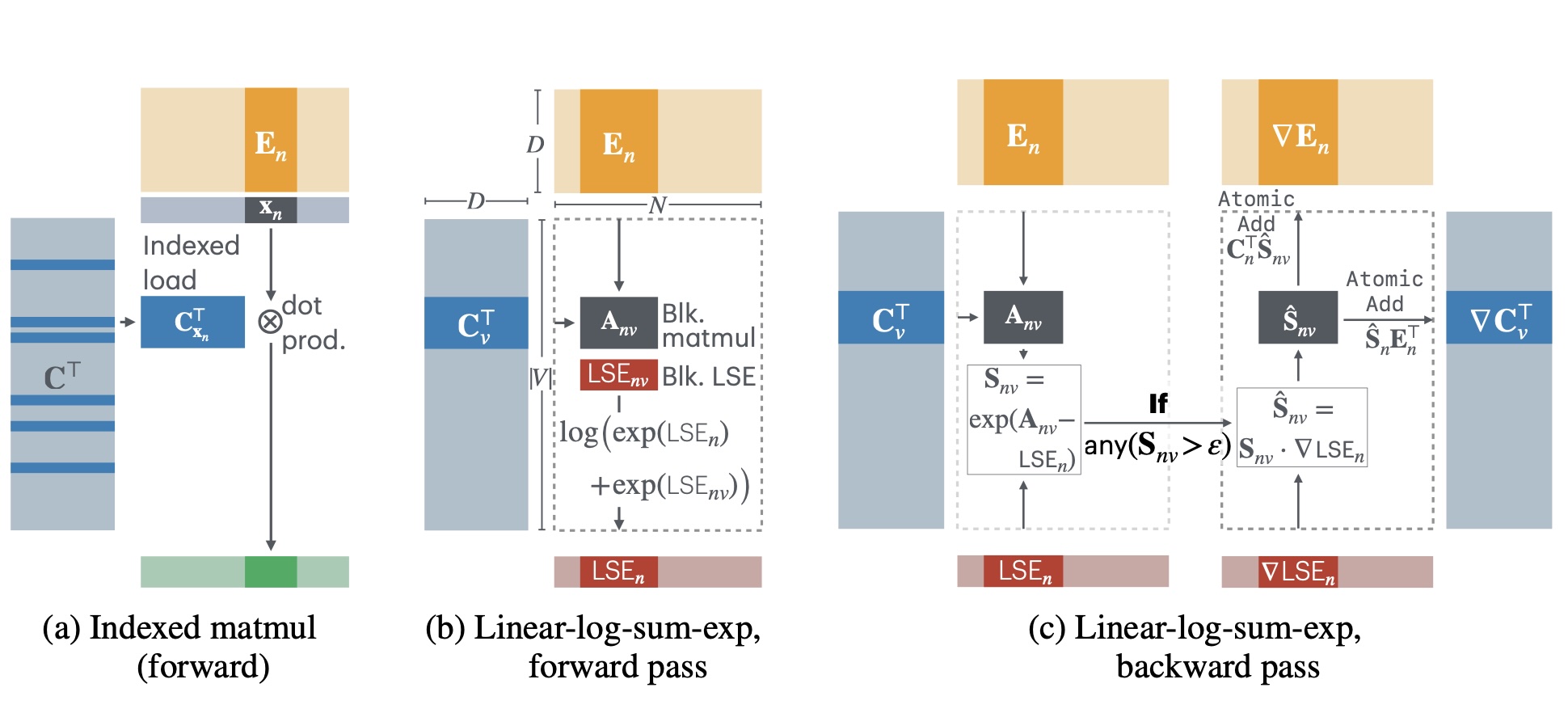

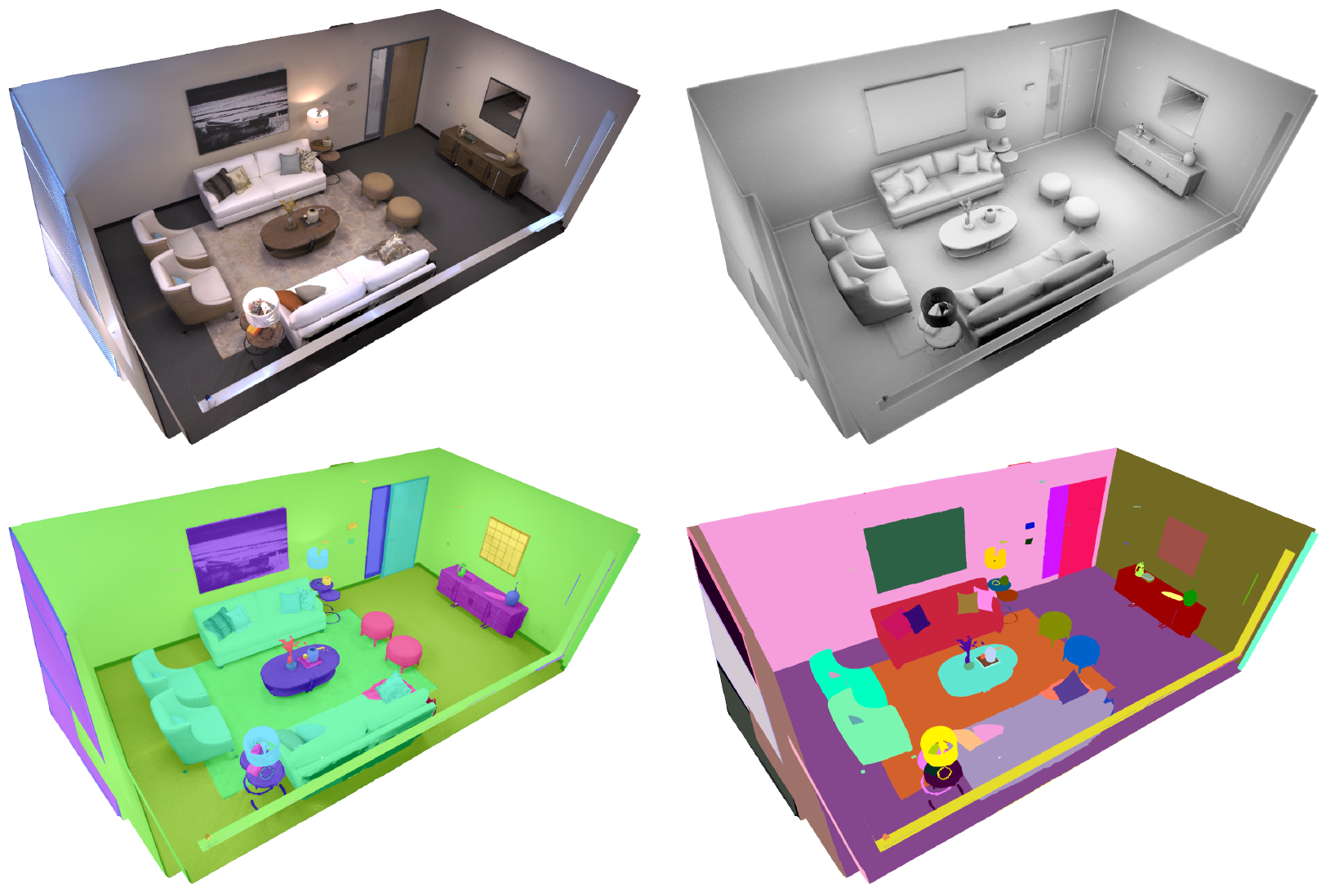

Habitat 2.0: Training Home Assistants to Rearrange their Habitat

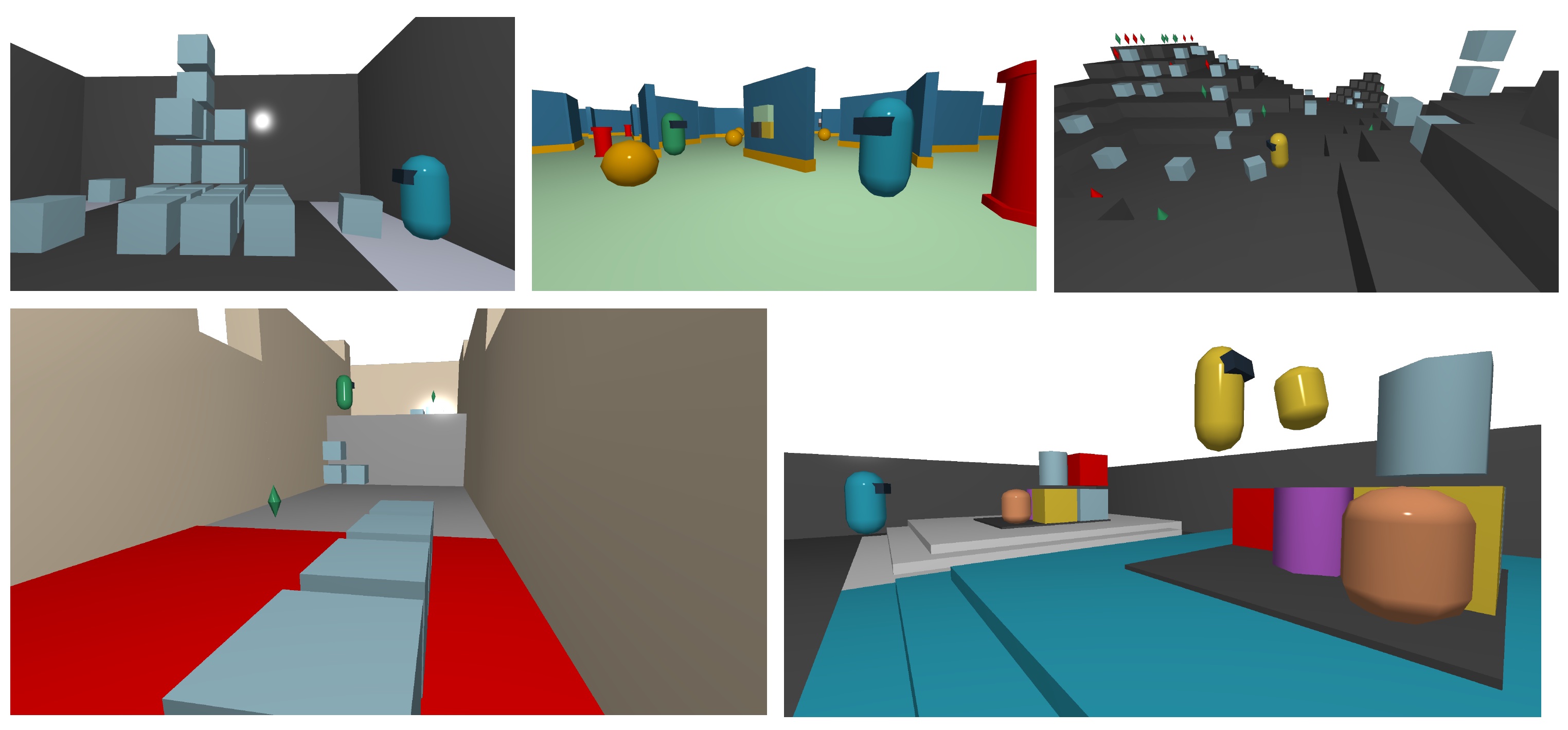

Megaverse: Simulating Embodied Agents at One Million Experiences per Second

Acceptance: 1,184 out of 5,513 submissions = 21.5%